INTRODUCTION

TwinMind is an AI app that transcribes audio and creates summaries. Users go to the chat feature to ask questions about live and past captures.

My Role

I owned the end-to-end chat redesign for mobile and handed off to dev. I partnered with Engineering to scope the MVP and iterate using post-launch feedback.

Team

OVERVIEW

AT A GLANCE

Redesigned the chat flow to be more reliable through thinking tokens, clear context and error + recovery states

BEFORE + AFTER REDESIGN

The new chat reduced perceived latency by 10-15 seconds or more

Before

After

PROBLEMS

Users couldn’t predict how the chat would respond or what context it was using

To build trust in AI, users need visible signals of progress, context, and grounding, not hidden controls or opaque loading states.

Confusing UI for key controls like the model selector

Opaque loading states that made streaming feel slower

Thinking tokens take up too much space in the response

POST-ROLLOUT

Figuring out how to show the context was key to differentiating TwinMind from generic AI tools

Post-launch feedback revealed confusion around model selection and chat context

CORE FLOWS

Model + context selector

Context + Thinking tokens

Error + recovery states

Usage in the rest of the app

REFLECTION

Coming up with 25+ common user questions and the tools the chat should use to answer them

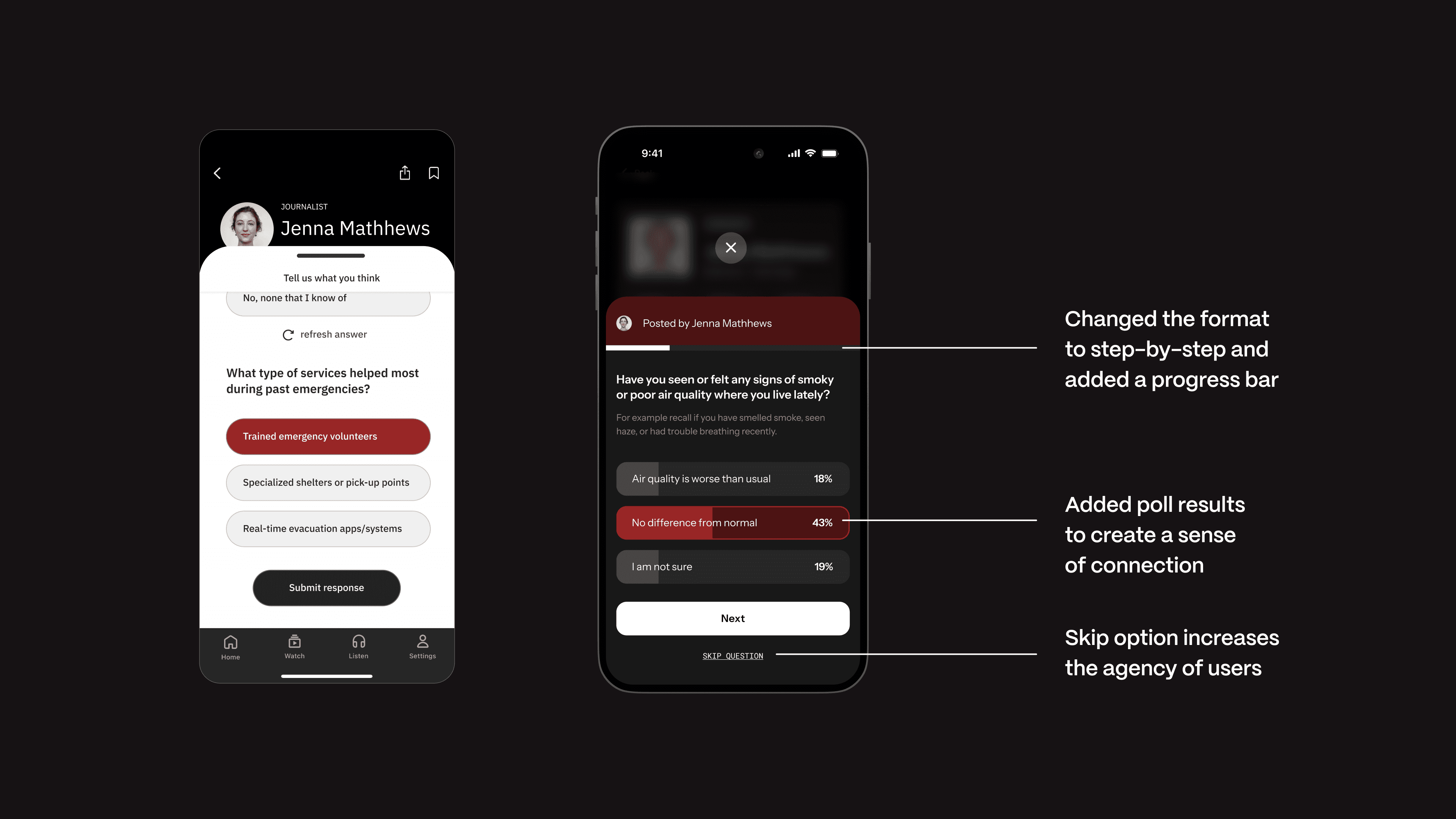

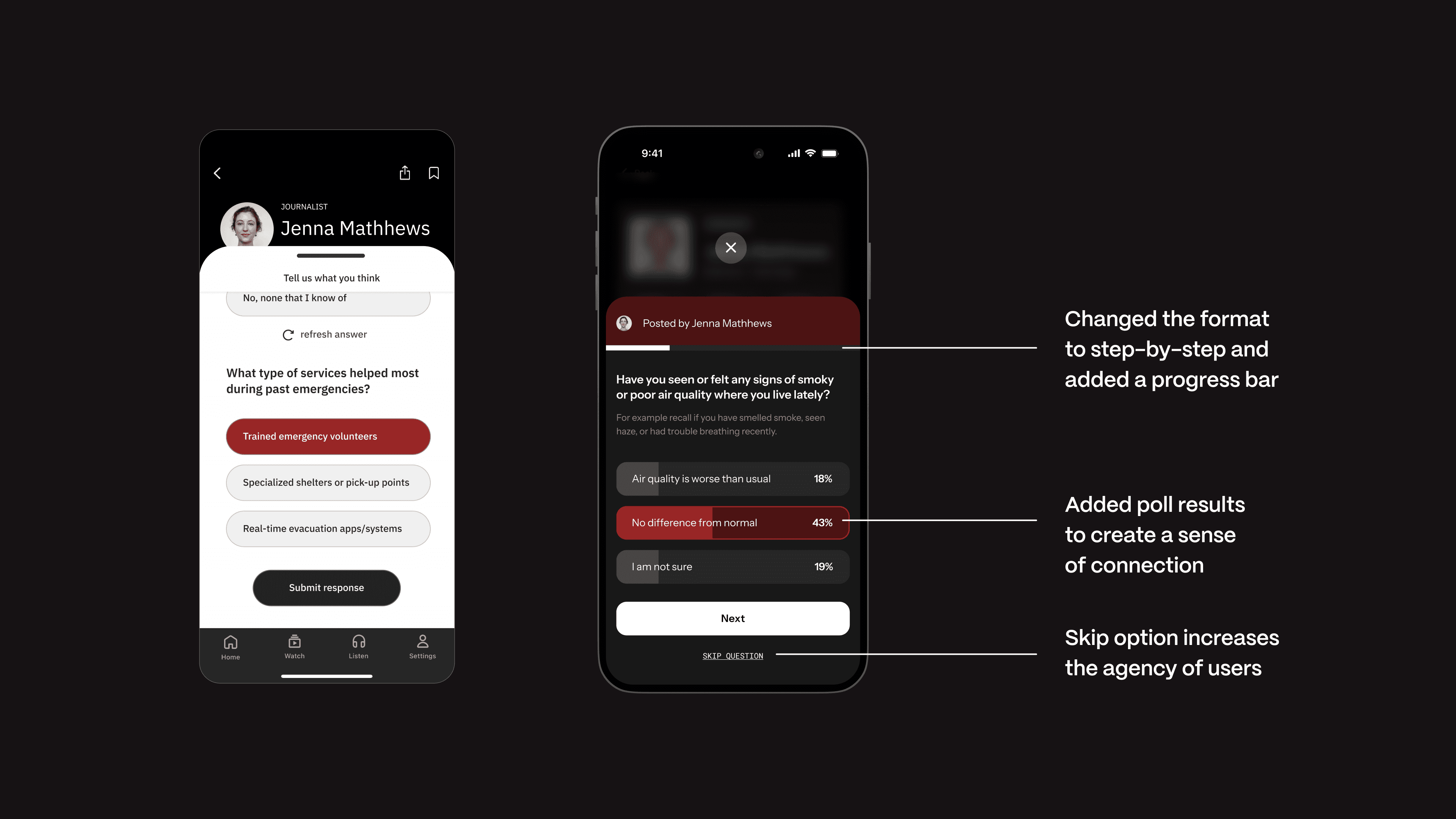

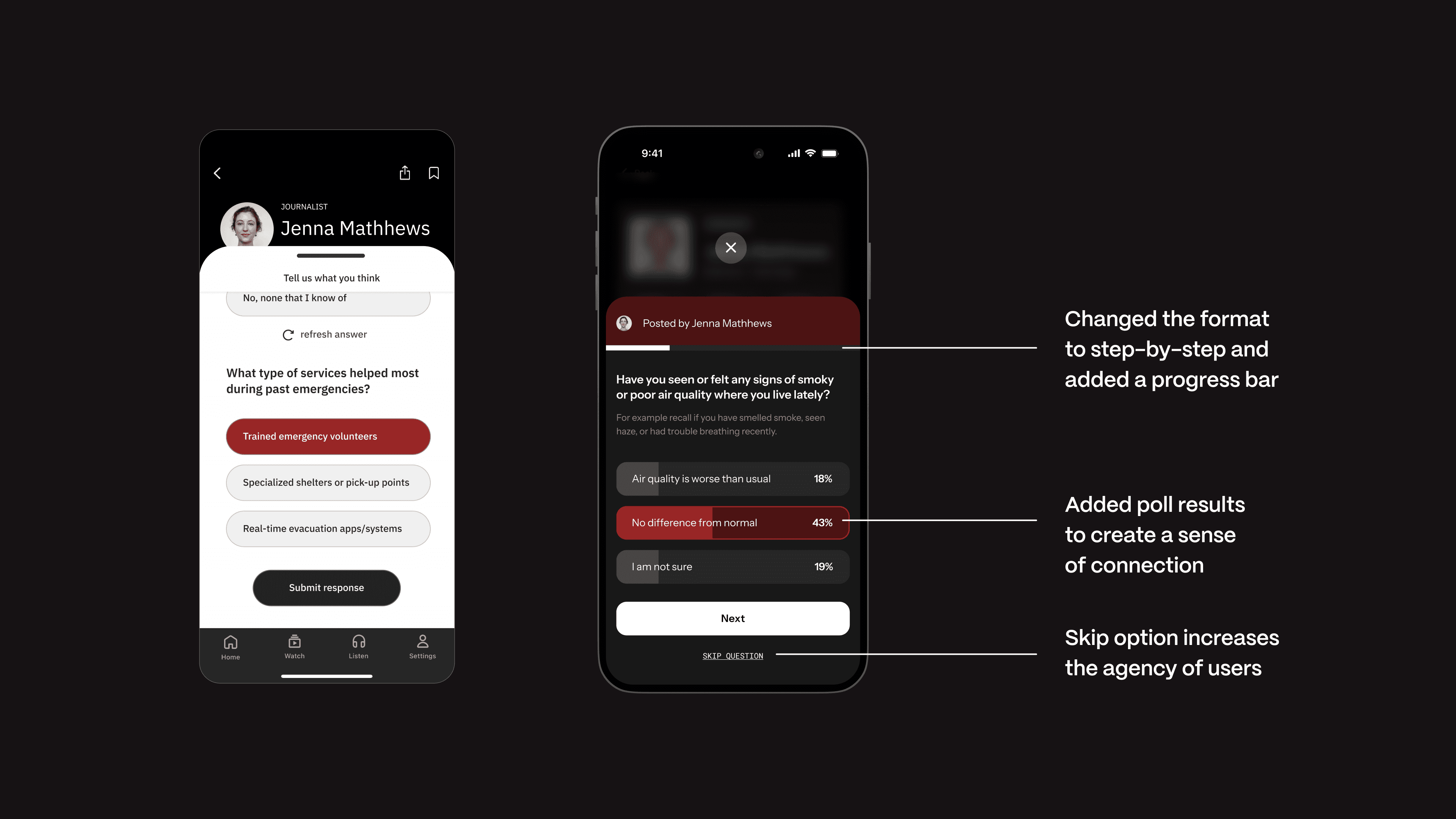

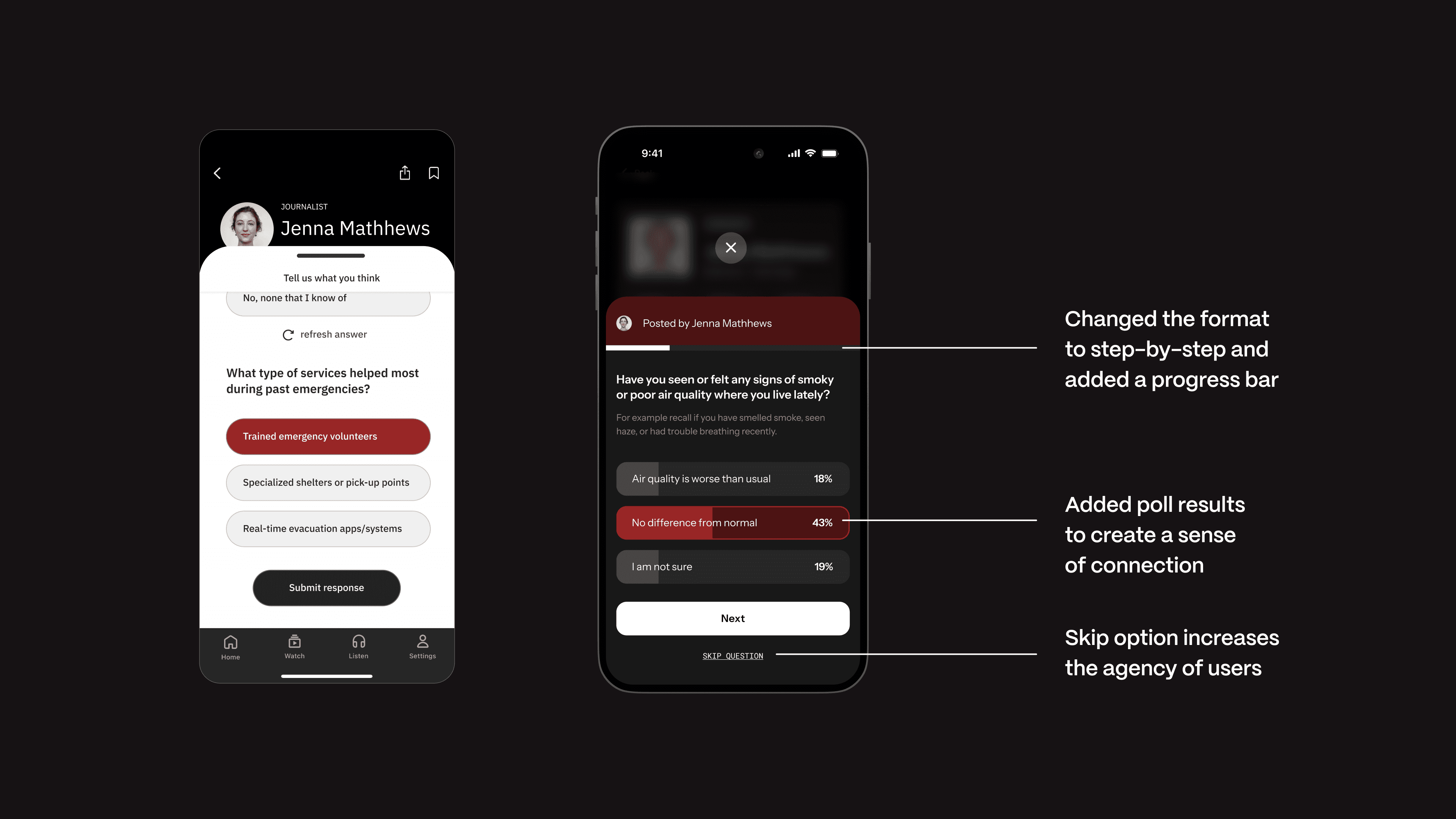

Rather than inventing new behaviors, I looked at tools journalists and audiences already trust—editorial review systems, creator platforms, and lightweight interaction patterns like polls and inline feedback.

Writing guidelines for response formatting

The natural next step is to design where journalists decide what to ask, when to ask it, and how to interpret responses. This would include:

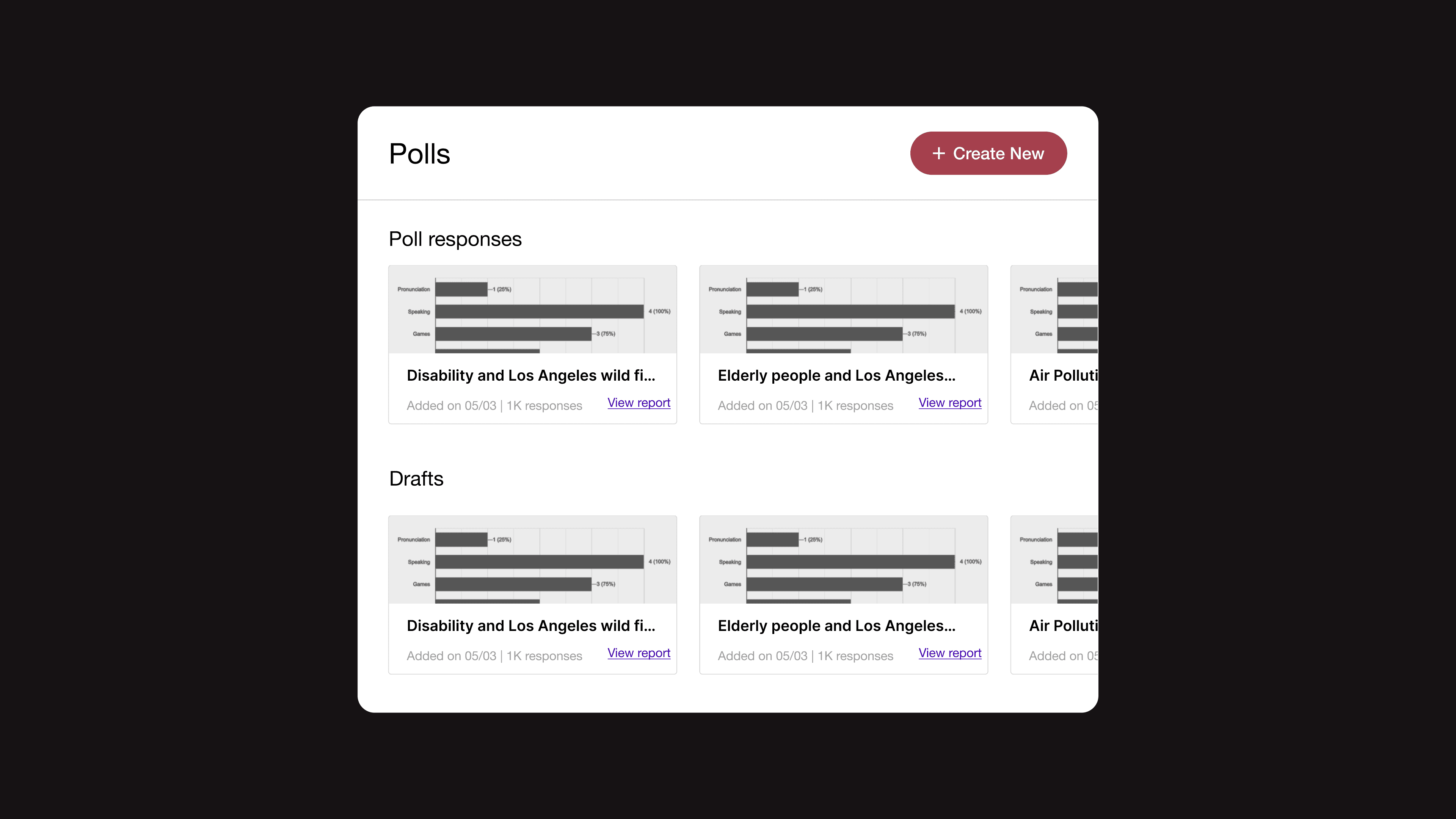

A guided poll creation flow that helps journalists frame unbiased, clear questions

Visibility into audience confidence, sample size, and response quality

Tools to translate poll insights into follow-up reporting